Anthropic said "No" to the Department of War: What does this mean for AI safety?

The Brief: On the end of lab-led AI safety

The Situation: Anthropic said No

This episode marks the securitisation of AI safety. Once defined as strategic infrastructure, safety constraints are subordinated to national-security interpretation. That shift is difficult to reverse.

I. Anthropic rejects the DoW ultimatum

II. Anthropic ties AI safety to the industry standard

III. Influence of the executive branch of the US government on AI safety grows

The Red Line: Where does AI safety go from here

I. Timeline: Anthropic rejects the Department of War ultimatum

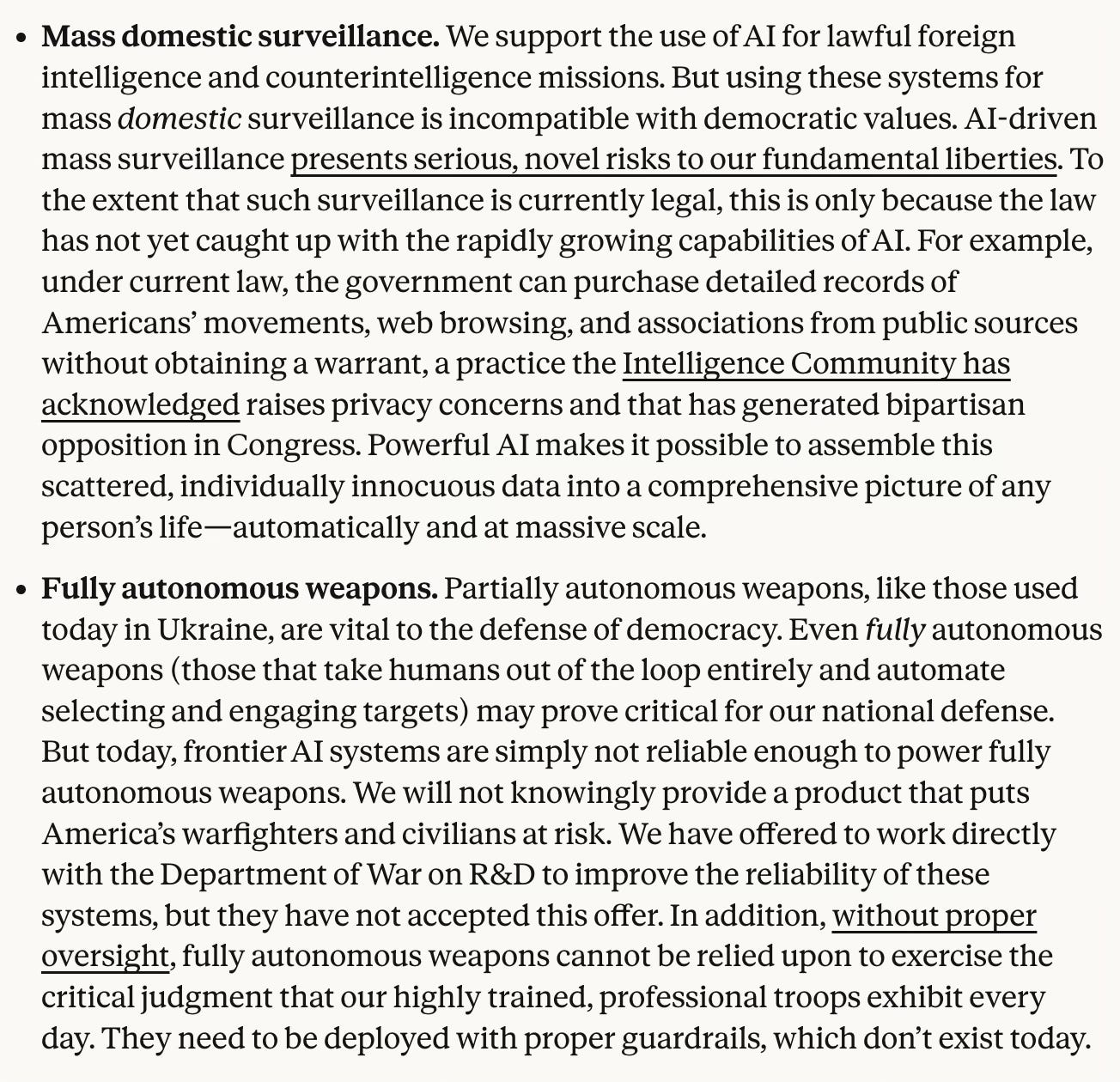

Feb 24: Anthropic publishes RSP v3.0

Three days before the standoff, Anthropic moved its safety standards from independent to floating. Safety commitments are now pegged to competitors.

From the RSP v3.0: “The distinction between our plans as a company and our industry-wide recommendations reflects the limitations of any single AI developer’s ability to ensure safety across the industry. In particular, we cannot unilaterally and unconditionally commit to staying in line with the industry-wide recommendations.”

The document received little public attention at the time.

Feb 25: Ultimatum issued

After a private meeting at the White House, the US secretary of war, Pete Hegseth, gives Anthropic’s chief executive, Dario Amodei, a 5.01 pm Friday deadline to provide the Department of War unrestricted military use of its AI technology. This includes removing Anthropic’s two red lines on fully autonomous lethal weapons and mass surveillance. Amodei is told that refusal could trigger the Defence Production Act, designating Anthropic a supply chain risk, a status typically associated with adversaries of the US.

Feb 26: Anthropic publishes a statement

“Regardless, these threats do not change our position: we cannot in good conscience accede to their request.”

Anthropic says it was the first frontier AI company to deploy models in US government classified networks and that Claude is widely deployed across the Department of War and other national security agencies for mission critical applications, including intelligence analysis, modelling and simulation, operational planning and cyber operations.

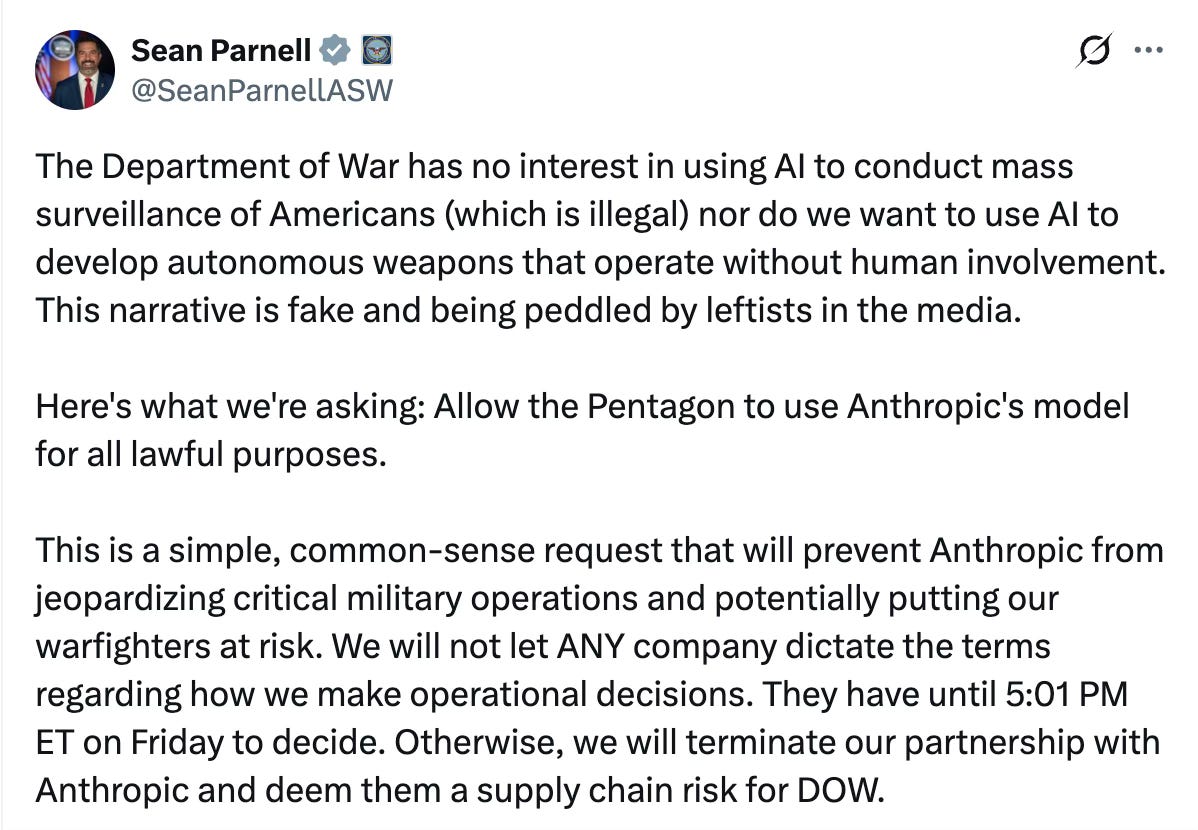

The Department of War rejects this framing. The Department had previously accepted Anthropic’s usage policy. The immediate trigger appears to be the January AI strategy memo directing Defence Department contracts to incorporate “any lawful use” language within 180 days. In that context, Anthropic’s refusal becomes an enforcement event for a policy decision already taken.

Feb 26: OpenAI internal memo leaks

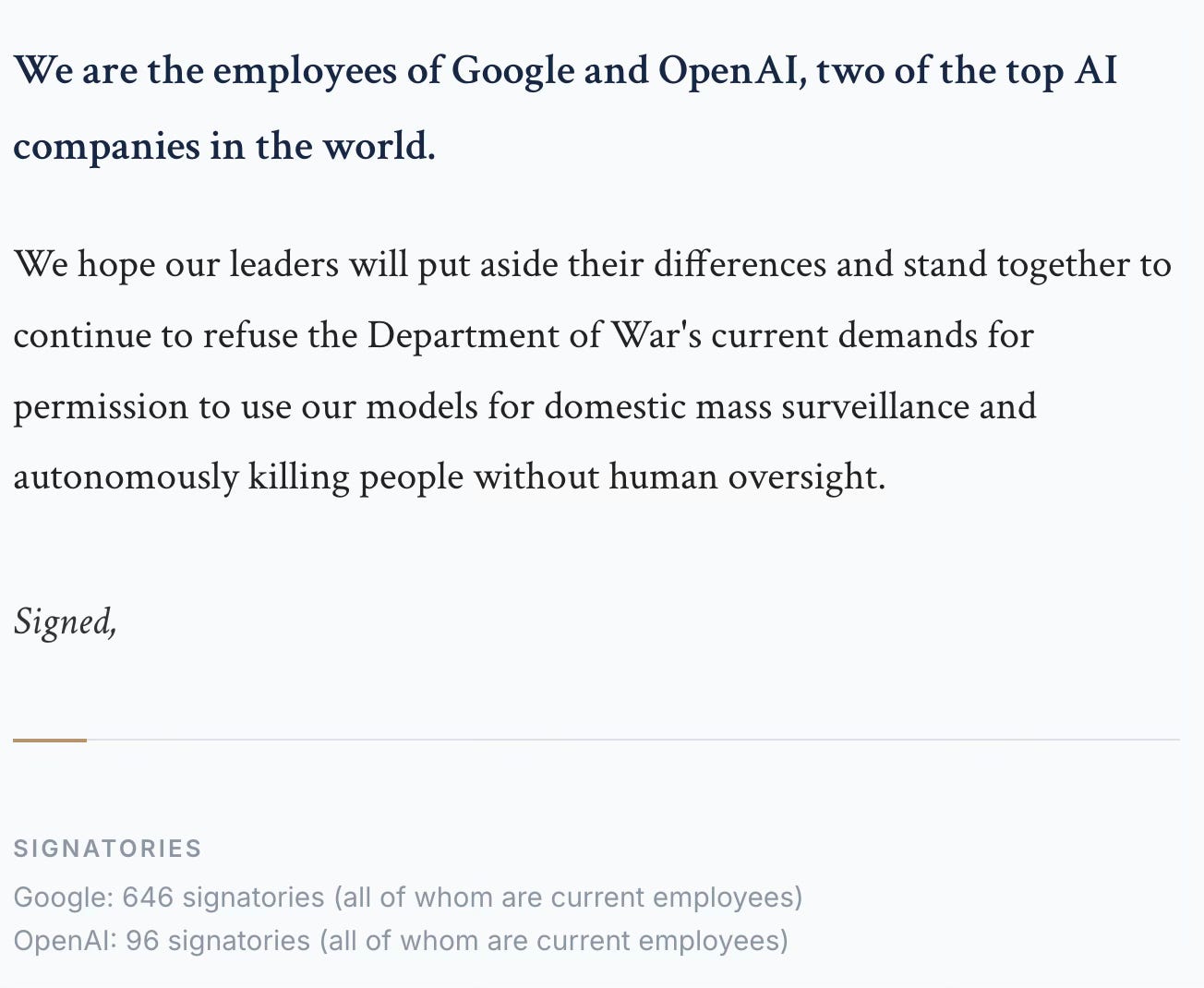

Sam Altman writes in a leaked memo that OpenAI shares Anthropic’s red lines, while confirming ongoing negotiations with the Department of War. Ninety-six OpenAI employees and 646 Google employees sign an open letter.

Feb 27 ~5pm: Deadline passes without a deal

Feb 27: Hegseth designates Anthropic a “supply chain risk”

“In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service.

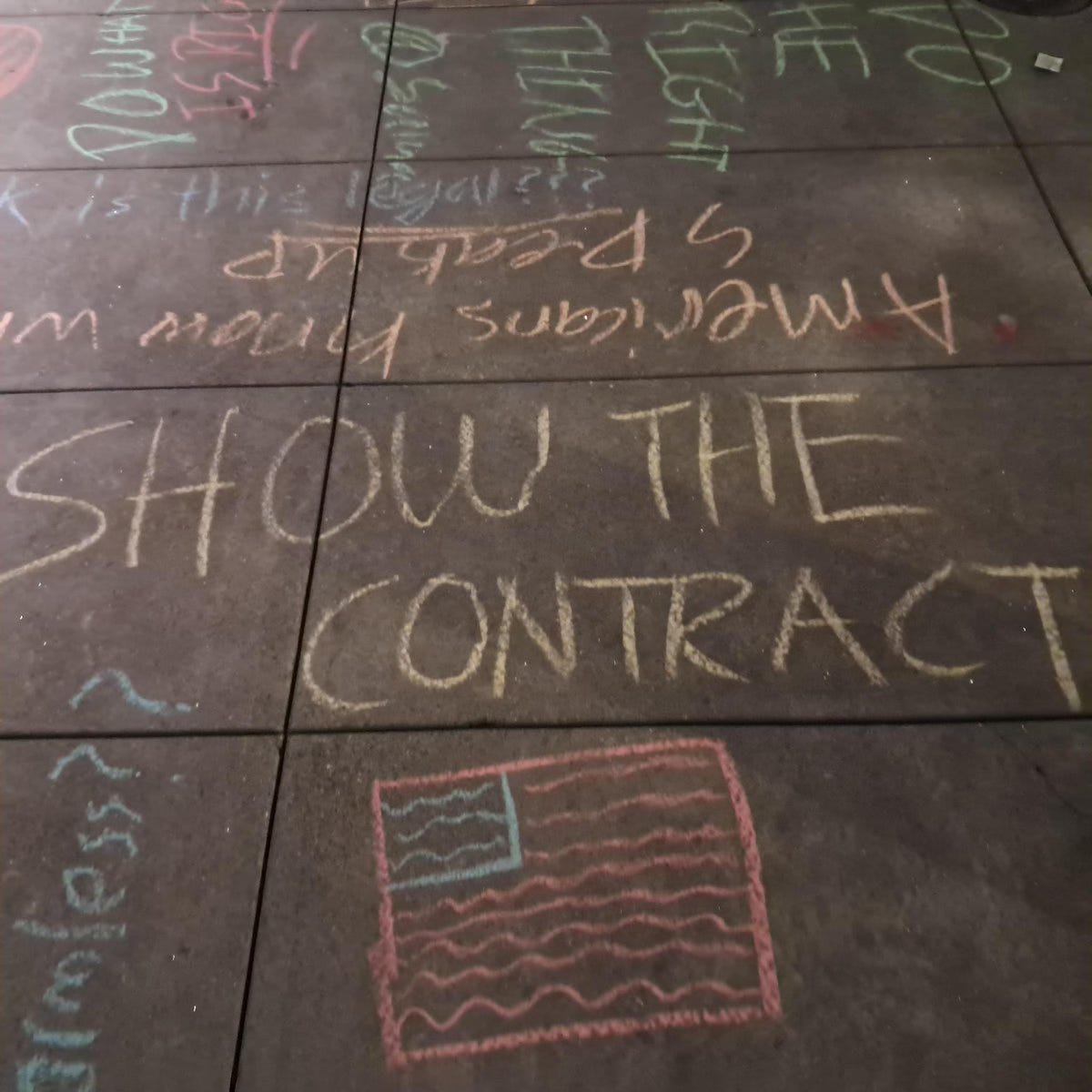

America’s warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final.”

Hegseth’s statement frames safety constraints as ideological rather than technical or legal. That framing matters because it declines to engage Anthropic’s position on its own terms.

The commercial consequence is direct. The statement prohibits contractors, suppliers or partners that do business with the US military from conducting commercial activity with Anthropic. Anthropic was reportedly tracking towards revenue parity with OpenAI, and that trajectory, including an eventual IPO pathway, becomes less certain under a de facto ecosystem restriction.

The designation also formalises an emerging reality. The US government, not frontier labs, sets the operative terms of national security deployment. The more relevant question for AI safety frameworks is whether they still assume labs are the primary unit of governance.

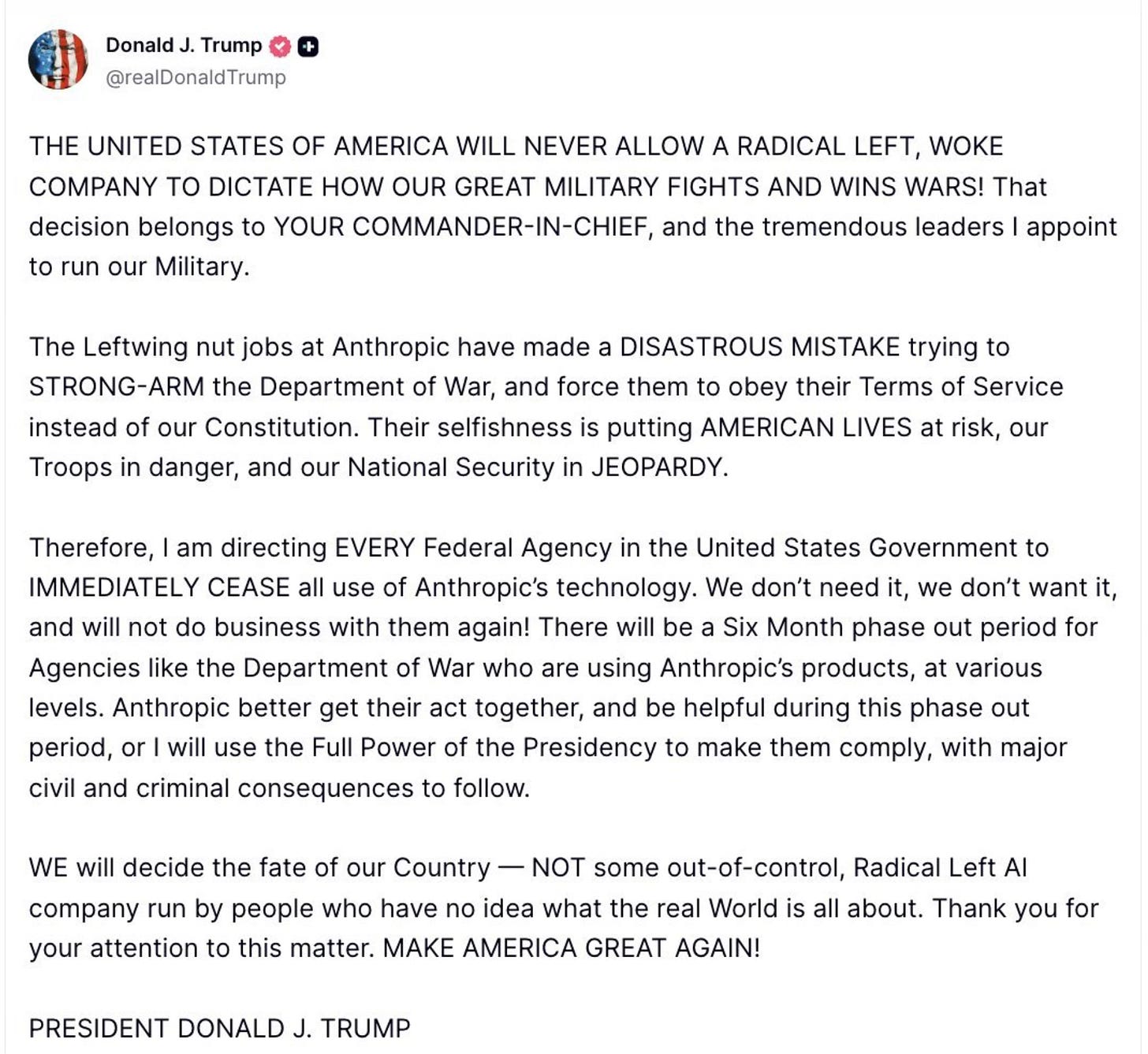

Feb 27 night: OpenAI deal with DoW

Altman writes “tonight, we reached an agreement with the Department of War”.

OpenAI publishes the following clause:

“The Department of War may use the AI System for all lawful purposes, consistent with applicable law, operational requirements, and well-established safety and oversight protocols. The AI System will not be used to independently direct autonomous weapons in any case where law, regulation, or Department policy requires human control, nor will it be used to assume other high-stakes decisions that require approval by a human decisionmaker under the same authorities. Per DoD Directive 3000.09 (dtd 25 January 2023), any use of AI in autonomous and semi-autonomous systems must undergo rigorous verification, validation, and testing to ensure they perform as intended in realistic environments before deployment.

For intelligence activities, any handling of private information will comply with the Fourth Amendment, the National Security Act of 1947 and the Foreign Intelligence and Surveillance Act of 1978, Executive Order 12333, and applicable DoD directives requiring a defined foreign intelligence purpose. The AI System shall not be used for unconstrained monitoring of U.S. persons’ private information as consistent with these authorities. The system shall also not be used for domestic law-enforcement activities except as permitted by the Posse Comitatus Act and other applicable law.”

The critical phrase is "where law, regulation, or Department policy requires human control." It means the Department of War retains discretion to define when its own policy requires human control. The clause defers to internal policy rather than creating a constraint external to the Department. By week’s end, neither CEO retained unilateral control over how their systems would be deployed in national-security contexts. The question is not whether Altman or Amodei acted in good faith. It is whether good faith is the relevant variable.

Feb 27 night: Anthropic announces lawsuit

“No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons. We will challenge any supply chain risk designation in court.”

II. Anthropic ties AI safety to the industry standard

By tying its safety commitments to industry baselines, Anthropic implicitly accepted that those baselines could shift under political pressure. That is what happened this week.

OpenAI’s deal states the same red lines Anthropic sought: no mass domestic surveillance, no autonomous weapons direction, and no high-stakes automated decisions. However, the enforcement mechanism is internal to OpenAI and the Department of War. It relies on cloud deployment, vendor controls and policy compliance rather than an external, independently enforceable constraint.

At capability parity, the safety standard is set by whoever deploys fastest with the fewest restrictions. The floor is defined by the most compliant actor. And Anthropic’s new RSP now benchmarks to that standard.

The OpenAI contract doesn't resolve the question Anthropic raised. It dissolves it. Anthropic's argument was that existing law has not caught up with AI: mass surveillance can be conducted legally using publicly available data aggregated at AI scale, in ways the Fourth Amendment does not yet prohibit. OpenAI's contract says it will comply with the Fourth Amendment. That's not an answer to Anthropic's concern. It's a restatement of the question.

III. Influence of the executive branch of the US government on AI safety grows

The administration has demonstrated both the will and the legal mechanism to enforce AI procurement terms on domestic companies. The DPA-based supply chain risk designation, applied to a domestic firm declining to modify its own product, is without direct precedent. Its legal durability is untested, but its deterrent effect is immediate. Other frontier labs will surely have received the message.

The key question is not whether the executive branch can set military procurement terms. It can. The key question is whether the executive branch has the institutional capacity to assess the risks of what it is authorising on the timelines it is demanding. The January memo reportedly mandates deployment of leading models within 30 days of public release. In that model, the safety assessment is performed by the customer about a product it has already decided to buy.

The lab-centric model of AI safety assumed labs would be the primary red-line backstop. This week suggests frontier labs either accept government terms or lose contracts, revenue and classified access.

To date, Congress has not produced binding AI safety legislation. In the absence of statute, procurement contracts become the primary governance instrument.

The Signal: The AI safety shift

The standoff clarifies where AI safety actually resides: in statute, in courts, or in executive policy. Currently, only one of those three has operational enforcement weight.

Scenario 1: Congressional intervention

What this looks like: Bipartisan legislation defining non-negotiable AI constraints and curtailing executive discretion.

Why this has low probability: Congress failed twice to pass a moratorium on state AI laws. The bipartisan resistance (99 votes to 1 in the Senate) is sometimes read as evidence that AI safety has cross-party support. It is more accurately read as resistance to federal preemption of state authority. When the moratorium failed legislatively, the administration moved to achieve the same outcome through executive order and DOJ litigation. Deregulation and executive control are not competing impulses here. They are the same impulse. The pattern is consistent: reduce the number of actors with enforceable authority over AI, and concentrate what remains in the executive branch. A governance field cleared of state law, congressional statute, and lab-defined constraints leaves one remaining authority: the one issuing the procurement contracts.

Scenario 2: Court constrains executive overreach

What this looks like: Anthropic wins in court, the supply chain designation is narrowed, and frontier labs regain partial autonomy.

Why this has medium probability: The legal question is novel. Can the executive branch designate a domestic company a national security risk for declining to modify its own product? While the case moves through courts, every other frontier lab is deploying under the terms Anthropic refused. The legal remedy and the competitive consequence run on different clocks.

Scenario 3: Executive dominance consolidates

What this looks like: All frontier labs accept “lawful use” clauses, safety becomes national-security aligned and corporate-governance RSP frameworks become symbolic.

Why this has high probability: xAI is the only frontier lab that bid on the Pentagon's autonomous drone software contest. OpenAI explicitly excluded drone control, weapon integration, and target selection from its own bid. The "lawful use" clause lands differently depending on who signed it. This is the base case. While Anthropic enters a six-month wind-down period, OpenAI and xAI hold active contracts and Google DeepMind negotiates its position. The leading indicator is Google DeepMind.

AI safety research can no longer treat the lab as the primary unit of analysis. The relevant actors are now the executive branch (sets permissibility), procurement contracts (operationalise it), and courts (may constrain it). Technical governance that ignores this triangle will misread the field.